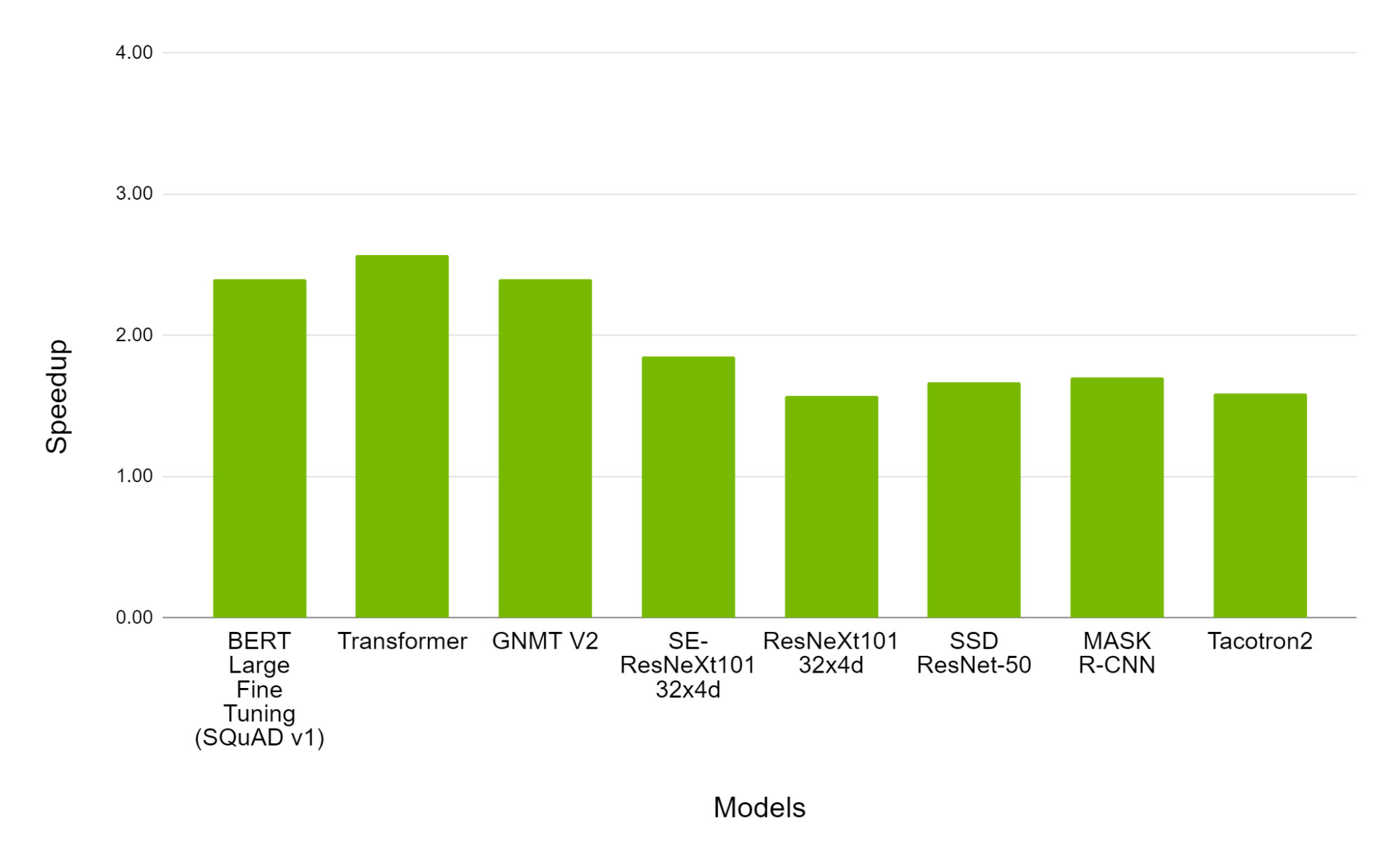

Revisiting Volta: How to Accelerate Deep Learning - The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores

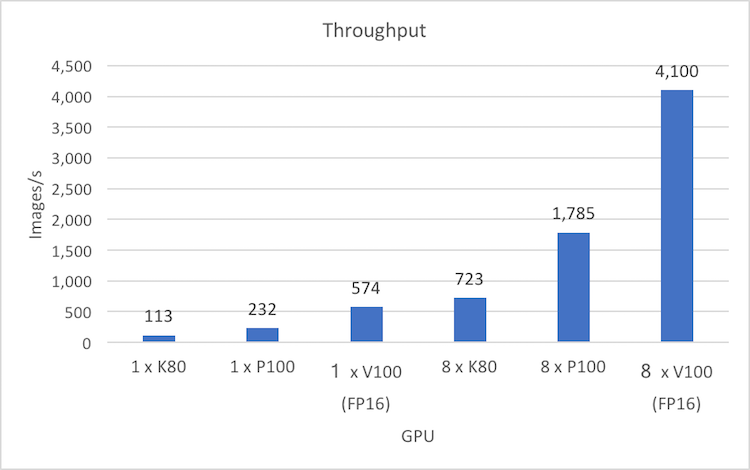

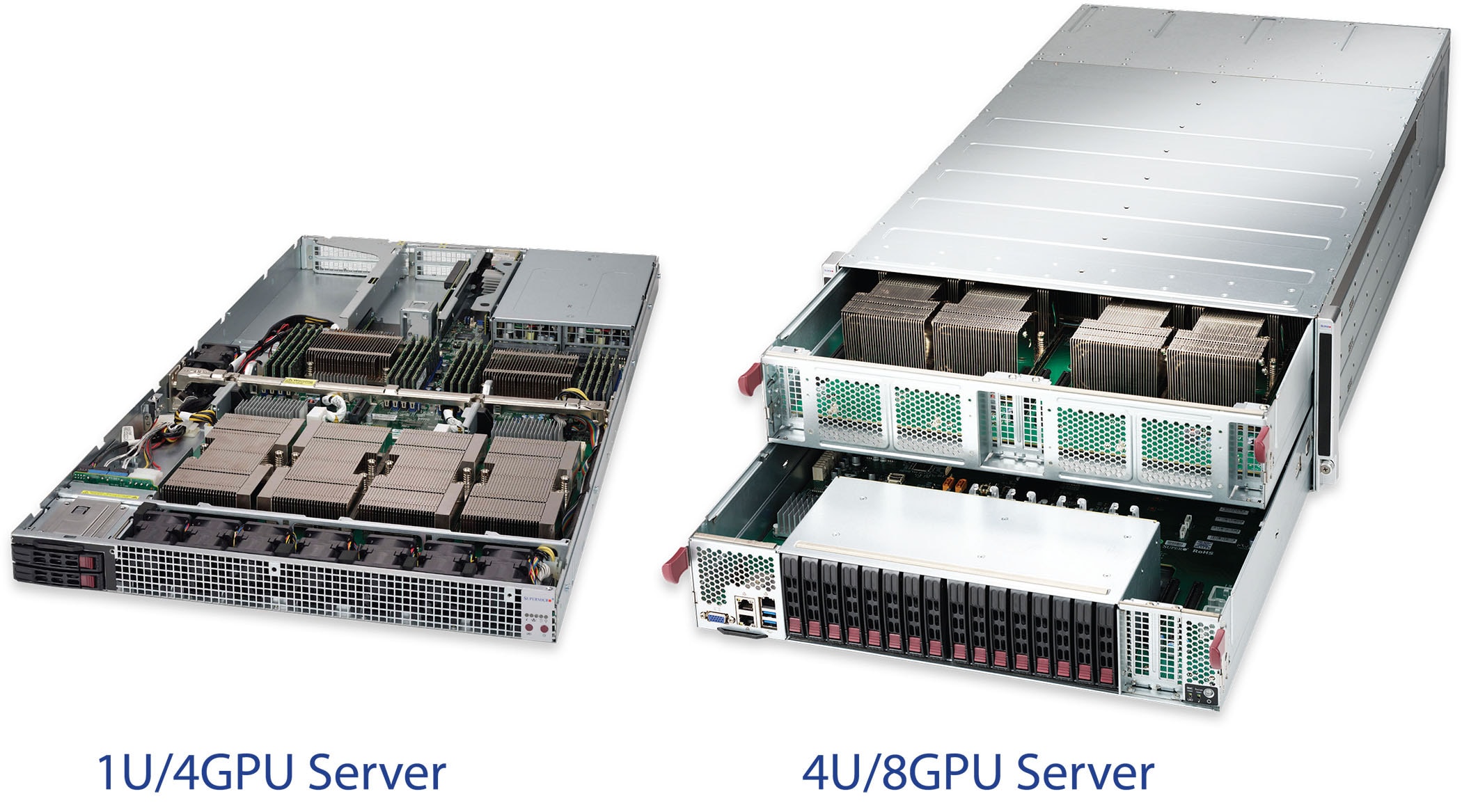

Supermicro Systems Deliver 170 TFLOPS FP16 of Peak Performance for Artificial Intelligence, and Deep Learning, at GTC 2017

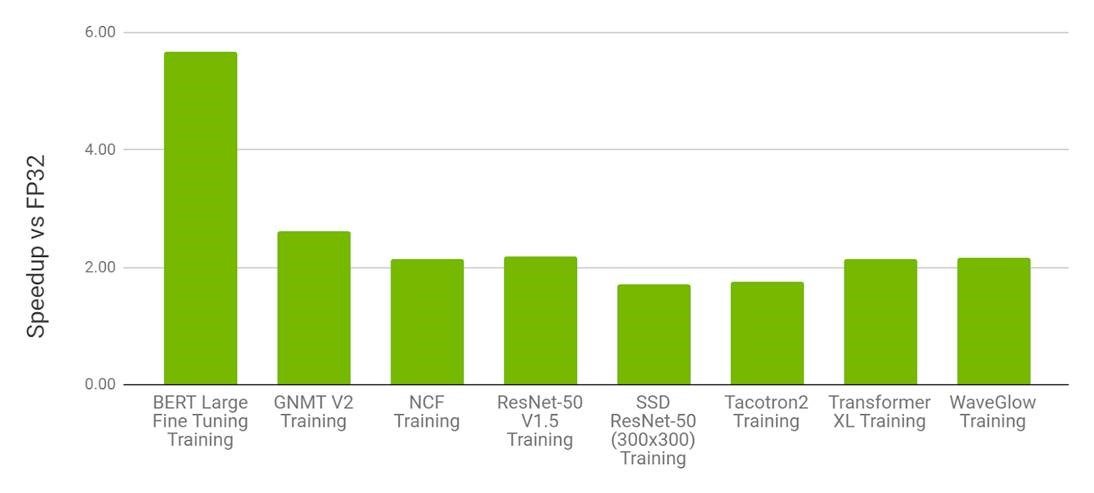

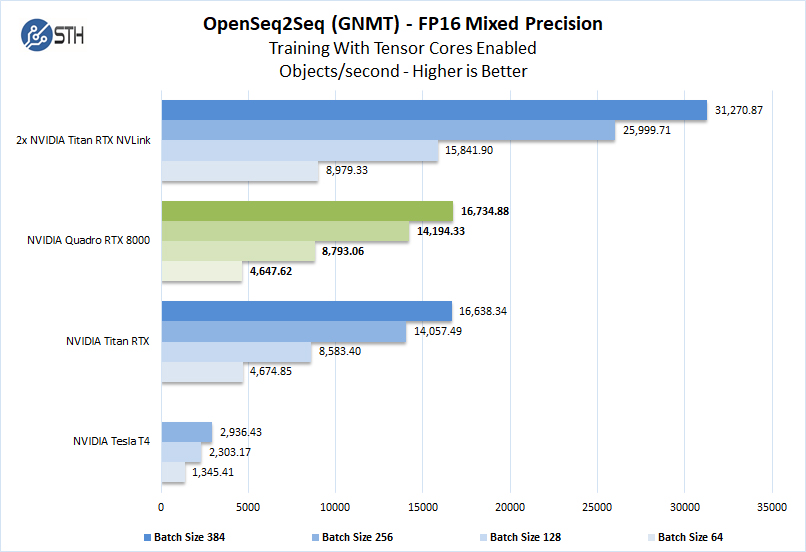

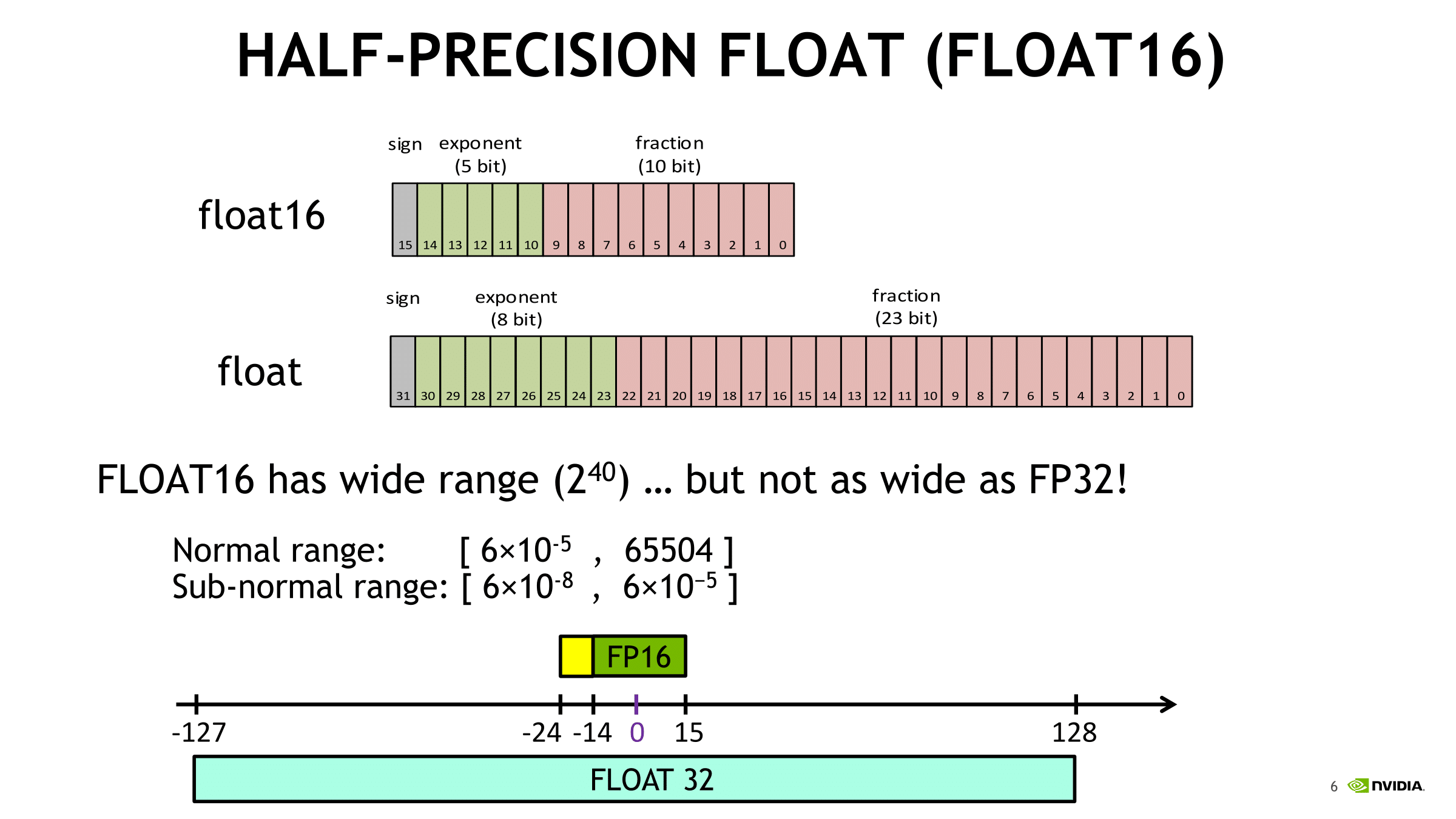

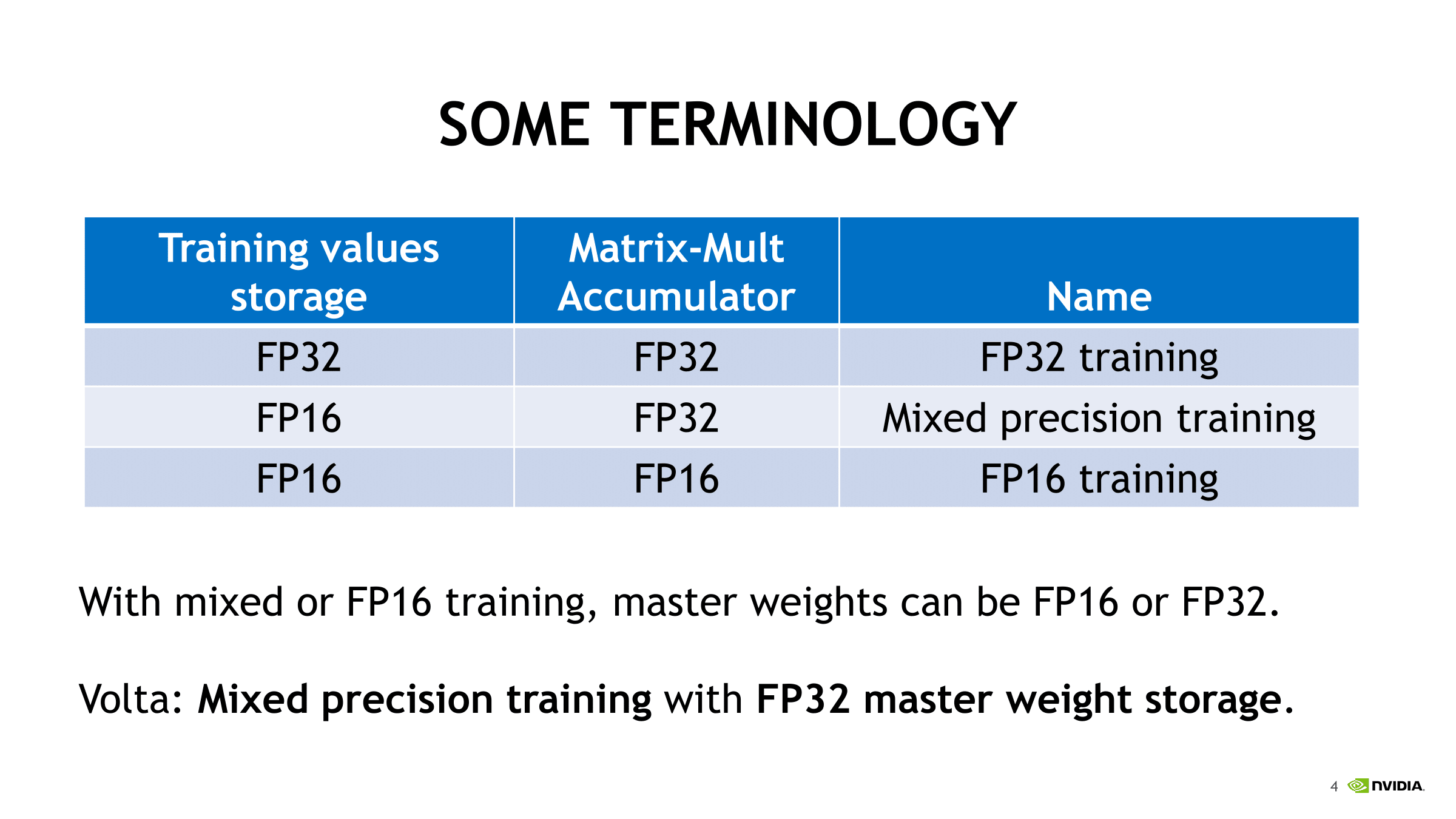

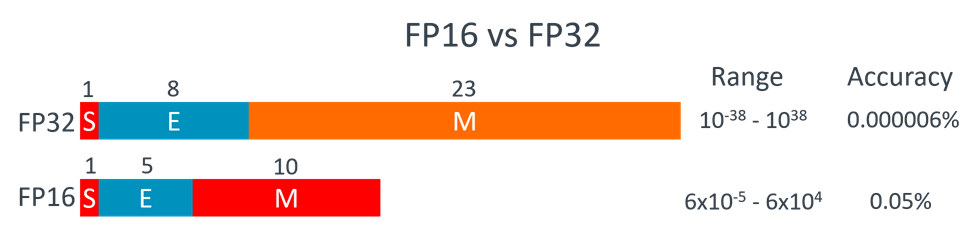

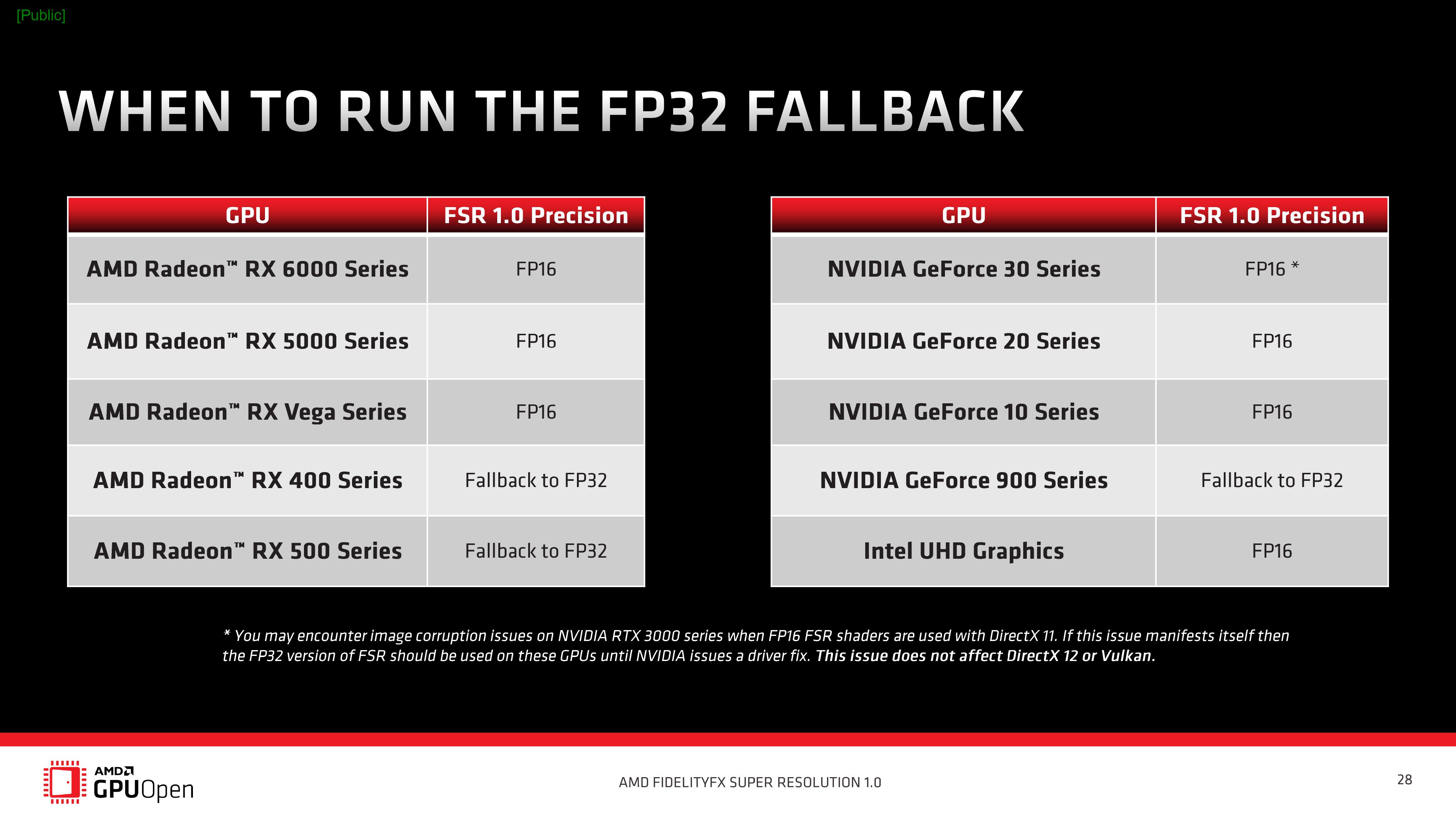

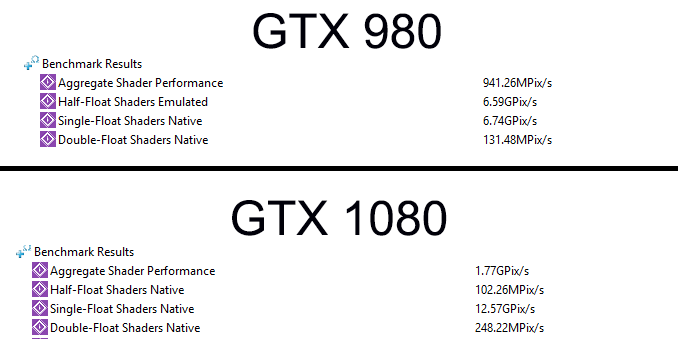

FP16 Throughput on GP104: Good for Compatibility (and Not Much Else) - The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

Nvidia Unveils Pascal Tesla P100 With Over 20 TFLOPS Of FP16 Performance - Powered By GP100 GPU With 15 Billion Transistors & 16GB Of HBM2

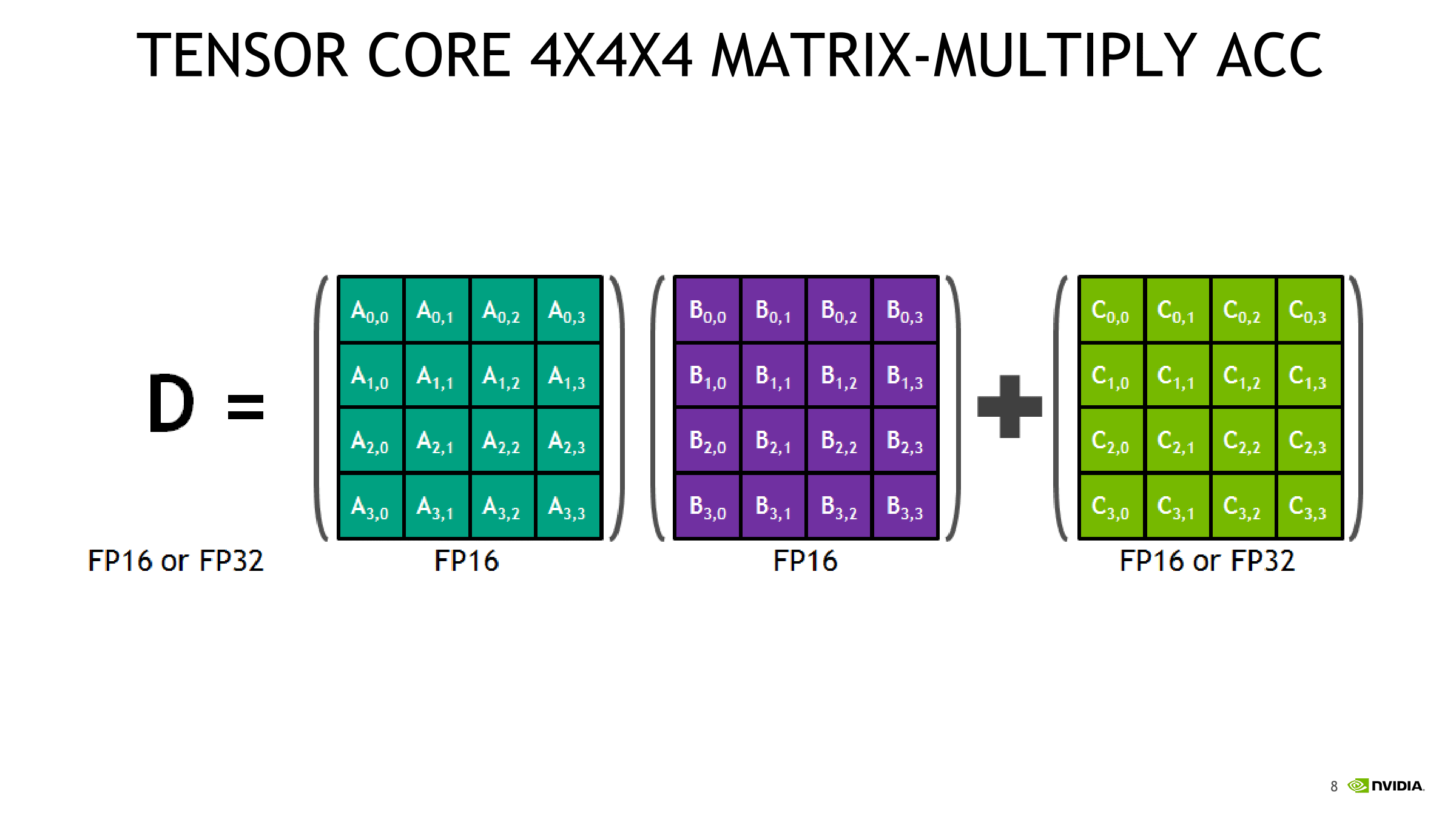

A Shallow Dive Into Tensor Cores - The NVIDIA Titan V Deep Learning Deep Dive: It's All About The Tensor Cores